Exploring How Users Discover and Use the Tool

We recruited 8 employees, most of whom had prior experience with AI tools such as ChatGPT or Copilot. Their most common use cases were editing writing, reviewing documents, searching for information, and planning tasks, which gave us a strong baseline for evaluating their expectations.

First, participants were given a description of the tool and asked where they expected to find it and what they expected it to be called. They were then asked to complete tasks with the tool (uploading documents, switching views, and resizing it) and to share their feedback.

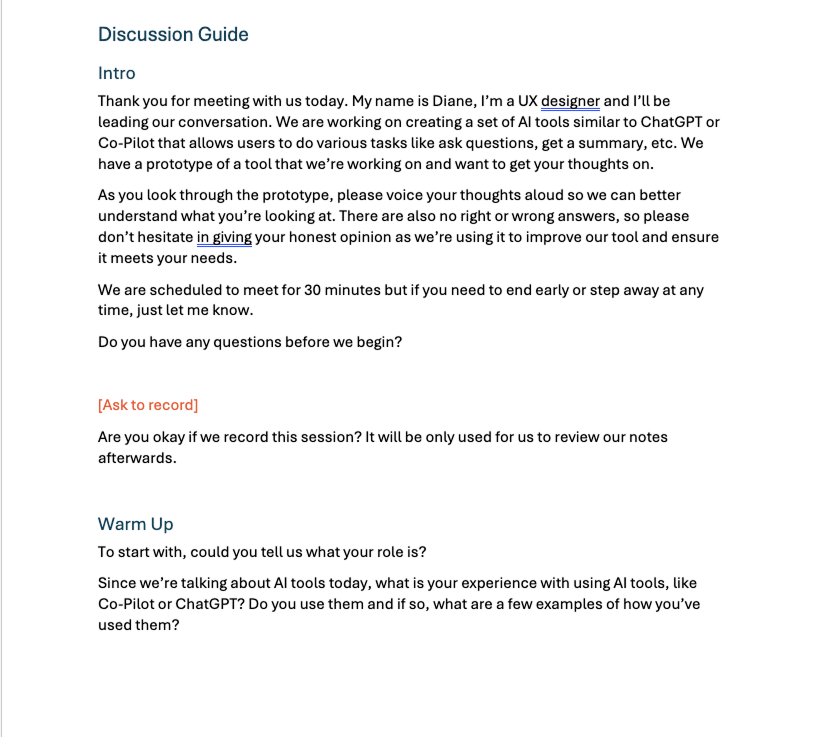

Research script used during sessions.

Example usability test session with a participant.