Evaluating the Voice Experience

Usability Testing

I recruited 10 participants and ran two rounds of remote, moderated usability testing over five weeks. Participants performed tasks using the voice bot on mobile to simulate in-car use, then answered follow-up questions about their experience. I also asked about their prior experience with voice assistants to understand the expectations they brought to the sessions.

Remote moderated sessions: participants interacted with the voice bot on mobile to simulate in-car conditions.

Example Usability Tasks

-

01

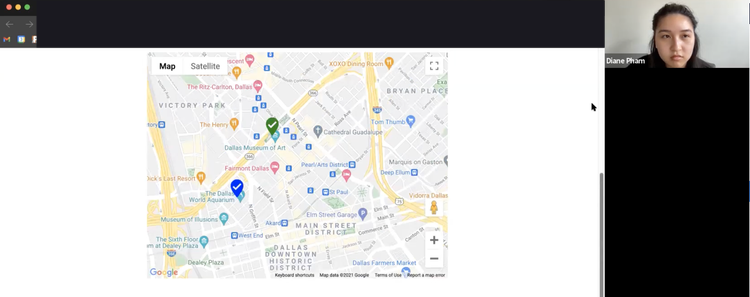

Basic Destination Request"Imagine you are out driving and want to go to the nearest Starbucks. How would you ask the Destination agent for directions?"

-

02

Mid-Route Destination Change"After getting directions to Starbucks, you also want to get directions to the nearest Target. How would you perform this task?"

-

03

Escalation to Live Agent"Imagine you are asking for directions to Starbucks and the Destination assist is not working as expected. What would you say in order to get help from a live agent instead?"

Conversation flow diagram mapping the bot's decision paths, fallback states, and escalation logic.